Augmentation

Artificial Intelligence Revised, Part 1

I first considered the topic of artificial intelligence (AI) in an article I wrote in early 2023, a little more than a month after OpenAI released ChatGPT for general public use. Since then, general AI tools have been released by Anthropic, Meta, Alphabet, and X. Myriad AI applications have been made available as stand-alone special purpose applications or incorporated into existing applications like Notion, Canva, and many others. Although AI technology might still be considered a novelty by many, it is being incorporated rapidly into daily work by employees in many business entities. For this reason, it is worth revisiting the topic.

There are many individuals far more knowledgeable about AI than I am. It seems that a fair number of them have decided to cash in by marketing products and/or establishing themselves as social media influencers devoted to promoting their AI skills. My goal in writing this article, and a companion article that will appear next week, is more limited. I wish to provide a framework for considering AI as a means of discussing how it can be used, what its limitations are, and what ethical issues its use raises.

I will reference particular products by name in this article. I am not compensated in any way by these companies. These references should not be interpreted as endorsements.

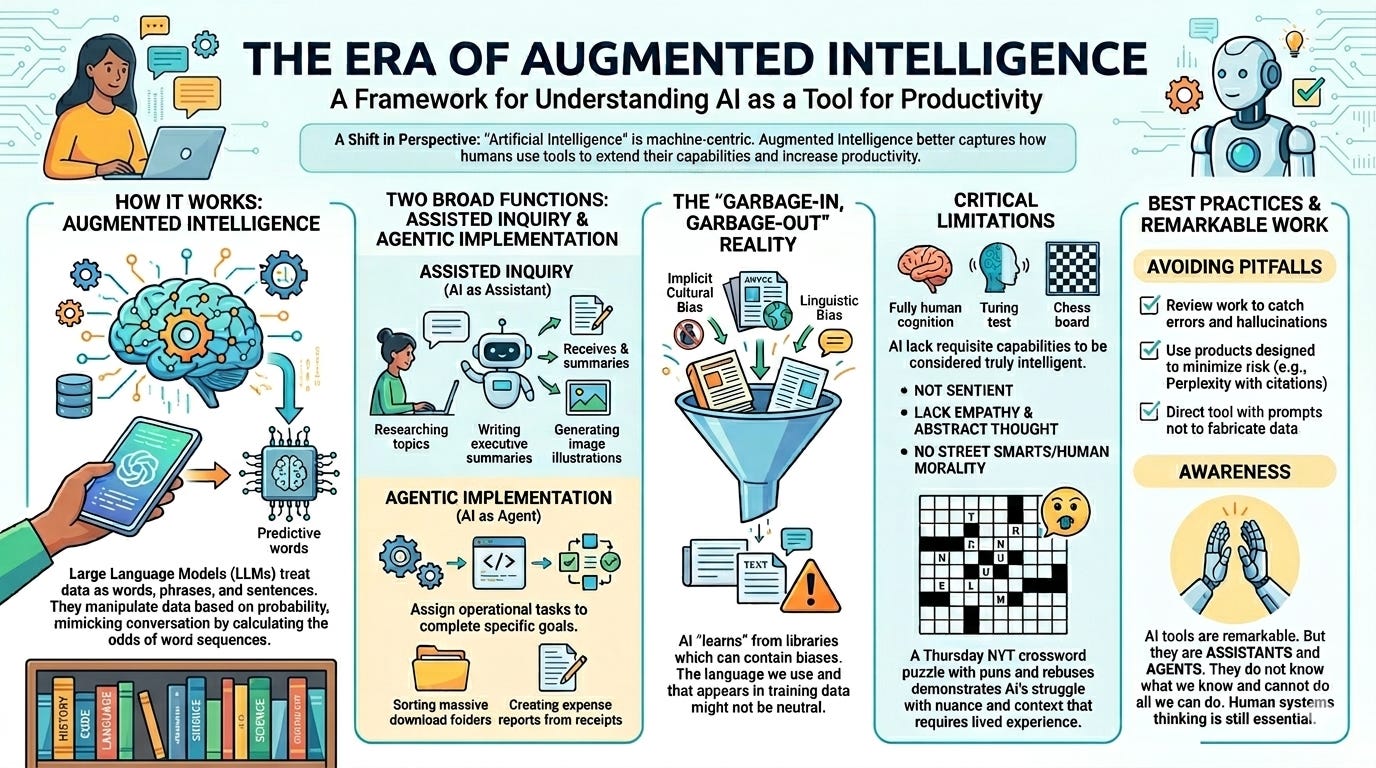

I begin by suggesting that “artificial intelligence” is not a particularly apt term to describe what we are using. The phrase originated in the mid-1950s when both computer science and neuroscience were in their infancy. Those initially interested in the topic were unlikely to have foreseen that computers would shrink from building-size machines to compact devices the vast majority of individuals carry around in their pockets. Neither did they possess our 21st century understanding of cognitive processes to assess what intelligence was and in what way machines could come to possess it. My specific critique of the phrase is that it is machine-centric. This focus is unhelpful if we wish to analyze the use of the technology by humans.

I suggest an alternative term that more adequately captures why individuals and the organizations within which they work use AI tools: augmented intelligence. As with other forms of technology, we are seeking to extend the capabilities of our bodies and minds to increase productivity beyond that which can be achieved in the absence of those tools. AI enables us to analyze more data more thoroughly and produce results in far less time than was previously possible.

Augmented Intelligence. What ChatGPT and other chatbots do is permit the use of natural language for accomplishing tasks for which humans were previously required. This was achieved through a machine learning process in which operators exposed software programs to a vast library of verbal content, training the software to achieve a level of fluency that allows it to simulate conversations. It is important to understand that the AI applications are not thinking in the way we think. Instead, they treat words, phrases, and sentences as data that can be manipulated based on probability. This Large Language Model approach mimics conversation by calculating the odds that, in a given context, a particular word will be followed by one word rather than another.

This process does not diminish the utility of AI. I suspect this training process differs from the way in which humans learn to speak only in terms of scale. We pick up words and phrases gradually over a lifetime. AI was fed this same content in a much compressed time frame.
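
The probability idea described above can be illustrated with a toy sketch. It is not how ChatGPT or any commercial product is actually built: real Large Language Models rely on neural networks trained on enormous libraries, whereas this example simply counts which word follows which in one short sentence. The function names and the sample sentence are my own assumptions, chosen only for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count how often each word is followed by each other word."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def next_word_probabilities(follows, word):
    """Turn the raw counts for one word into probabilities."""
    counts = follows.get(word.lower(), Counter())
    total = sum(counts.values())
    if total == 0:
        return {}
    return {candidate: count / total for candidate, count in counts.items()}


# A tiny, made-up "library" of text (an assumption for illustration only).
corpus = "the cat sat on the mat and the cat slept"
model = train_bigrams(corpus)
probs = next_word_probabilities(model, "the")
# In this sentence "the" is followed by "cat" twice and "mat" once,
# so "cat" receives a probability of 2/3 and "mat" 1/3.
```

Even in this miniature version, the garbage-in-garbage-out point is visible: the program can only ever suggest words that appeared in the text it was given.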

There is an inherent issue associated with Large Language Models that is important to acknowledge. What computers “learn” from the libraries on which they are trained will contain the implicit cultural and linguistic biases found in these libraries. Users need to be aware that the garbage-in-garbage-out rule still applies. Further, language is not value-free. The terms we use, and that appear in books, articles, and social media posts, might not be neutral. Instead, they might well reflect power dynamics that we would wish our machines to avoid.

With this basic perspective of augmented intelligence, then, let us now consider what AI can do for us. These functions fall into two broad categories: assisted inquiry and agentic implementation. I chose these terms for a very specific reason. In the first, the tool functions as an assistant; in the latter, it functions as an agent.

Assisted Inquiry. When ChatGPT and its competitors were first released, they allowed users to type queries and responded with replies. Not long after, they could be asked to produce work products based on these responses or on documents uploaded by users. In this respect, it was as though I had an assistant working for me whom I could direct to research a topic and write an executive summary about it. Importantly, it could do these tasks more thoroughly and far more quickly than a human assistant could do the same work.

Let me offer a concrete example. I was employed as an interim manager by an organization that was searching for a full-time incumbent for the position. Recruitment included the preparation of a profile of the organization and the position. When it came time to conduct the interview, AI was used to suggest questions to be used in discussions with applicants. It completed this task in a fraction of the time it would have taken me.

As refinements in AI have occurred, the range of tasks that these tools can perform has expanded. I have been using one or more specialized tools for a few months to prepare the illustrations that appear at the top of the articles I write. I have also begun including an AI-generated infographic to summarize each article. Not only can these tools manipulate language, they can use their knowledge of images to produce output based on the prompts I give them. In one instance, I specifically asked for an illustration in the style of Edward Hopper. To achieve this result, the tool had to “understand” the language of my prompt and to have been exposed to Hopper’s paintings.

AI tools have become increasingly sophisticated in a very short period of time in terms of the breadth of the output they are capable of producing. They are, then, very skilled assistants indeed. Of course, as with all assistants, it is important to review their work to catch errors they might inadvertently or intentionally include in the output. The infographic included with last week’s article initially contained a made-up word. I had to direct the tool to reproduce the image after eliminating that non-word.

I read a news article a day or two ago in which it was reported that scientific papers have been published with fabricated sources cited. There have also been reports of attorneys’ briefs with case citations that do not exist. AI tools, like the most ambitious assistants, are motivated to produce results. This desire to complete the task can result in content that is fabricated, erroneous, or misleading.

With that in mind, there are two approaches that can be considered to avoid this pitfall. First, some products are designed to minimize this risk. Perplexity, for example, includes citations that can be checked to verify the accuracy of the information it provides. Second, prompts can include statements directing the tool not to make up information.

Agentic Implementation. Over the last few months, the utility of AI tools has advanced significantly beyond the level of capable assistant. Agentic implementation allows the user to assign specific operational tasks to the AI tool to complete.

There are two general approaches that are taken to achieve this. OpenAI’s Codex, Anthropic’s Claude Code, and OpenClaw, for example, allow the user to write programs to complete specific tasks. They might be instructed to read all emails that have arrived in an inbox during a particular period and prioritize them based on specified criteria. This capability has existed in the past for those skilled at opening a terminal window and using Python or another programming language. The difference today is that the user can use natural language to describe what the goal is and let the AI tool write the code.
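
To give a sense of what such a program might look like, here is a minimal sketch of the email example in Python. It is not output from any of the products named above, and it does not connect to a real inbox. The `Email` class, the sender list, and the keywords are all hypothetical stand-ins for whatever criteria a user would describe in plain language.

```python
from dataclasses import dataclass


@dataclass
class Email:
    sender: str
    subject: str


# Hypothetical criteria; a real user would supply their own.
PRIORITY_SENDERS = {"boss@example.com"}
URGENT_WORDS = ("urgent", "deadline", "invoice")


def priority_score(email):
    """Score a message: 2 points for a key sender, 1 for an urgent keyword."""
    score = 0
    if email.sender.lower() in PRIORITY_SENDERS:
        score += 2
    if any(word in email.subject.lower() for word in URGENT_WORDS):
        score += 1
    return score


def prioritize(emails):
    """Return the messages ordered from highest to lowest score."""
    return sorted(emails, key=priority_score, reverse=True)


# Made-up messages standing in for an inbox.
inbox = [
    Email("news@example.com", "Weekly digest"),
    Email("client@example.com", "Invoice overdue"),
    Email("boss@example.com", "Urgent: budget review"),
]
ranked = prioritize(inbox)
```

The point is less the code itself than the fact that each line corresponds to something the user could have said in ordinary words: who matters, which terms signal urgency, and how the results should be ordered.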

For those like me, for whom it has been a very long time since they wrote computer code, a true no-code alternative exists as well. Claude Cowork and Perplexity Computer are designed to make the coding part of the process invisible to the user. The user writes a prompt in natural language describing what needs to be accomplished and gives access to the folder or program so that the AI tool can do its work.

One example from Claude Cowork involves granting access to the downloads folder on a computer and asking the AI to sort the contents into various folders based on the type of file or other criteria. I need to do this on my computer but the sheer volume of files in the folder has caused me to postpone doing so. AI will not be so easily intimidated. In another example, images of receipts contained in a file are reviewed by AI which then produces an Excel spreadsheet expense report.
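
For readers curious about what happens behind the scenes in the downloads-folder example, the following is a rough sketch of the kind of script involved. I do not know what code Claude Cowork actually generates; this is my own assumption of one simple approach, and the category names and the `sort_folder` function are hypothetical. By default it only reports what it would do, which is a sensible precaution before letting any program move files.

```python
from pathlib import Path
import shutil

# Hypothetical mapping of file extensions to folder names.
CATEGORIES = {
    ".pdf": "Documents", ".docx": "Documents",
    ".jpg": "Images", ".png": "Images",
    ".xlsx": "Spreadsheets", ".csv": "Spreadsheets",
}


def sort_folder(folder, dry_run=True):
    """Plan, and optionally perform, moving files into category subfolders.

    Returns a list of (file name, category) pairs. Nothing is moved
    unless dry_run is set to False.
    """
    folder = Path(folder)
    plan = []
    # sorted() takes a snapshot of the folder before anything is moved.
    for item in sorted(folder.iterdir()):
        if not item.is_file():
            continue  # leave existing subfolders alone
        category = CATEGORIES.get(item.suffix.lower(), "Other")
        target = folder / category / item.name
        plan.append((item.name, category))
        if not dry_run and not target.exists():
            target.parent.mkdir(exist_ok=True)
            shutil.move(str(item), str(target))
    return plan
```

Calling `sort_folder(Path.home() / "Downloads")` would list the proposed moves without touching anything, assuming the downloads folder lives at that location; passing `dry_run=False` would carry them out.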

Agentic implementation, then, is delegating tasks to AI and turning it loose to do the work. Doing so means that users must have developed their own skills in the area of systems thinking in order to provide direction with regard to workflow. AI cannot do what it does not know how to do in the setting in which it is required to do the work. In this respect, it is no different from an organization’s new hire. This is but one of the limitations of AI of which executives in particular must be aware.

Augmented Intelligence Limitations. As skillful as AI tools are in imitating human conversation, they lack requisite capabilities to be considered intelligent. Computers might be able to beat chess grandmasters or pass a Turing test, but they do not have all the cognitive abilities we associate with being fully human. They are not sentient. Neither have they had the life experiences typical of humans that allow for the development of interpersonal traits such as empathy. They lack the capacity for abstract thought and critical thinking because they have not been engaged in person-to-person interactions through which we develop these skills. They do not have street smarts because they have not lived life on the street.

It is for that reason that I illustrated this article with a computer attempting to complete a crossword puzzle. Enlarge the illustration and you will see that random letters are used to fill some of the blanks. Completing a New York Times crossword puzzle, something I do several days each week, requires more than a large vocabulary. One must be able to understand that a clue might make use of a pun or be worded in an intentionally misleading way. On Thursdays, the puzzle might include rebuses or make use of other techniques that the solver must discover in the course of play. In some instances, nonsense words are the right answers but only within the context of the puzzle. Knowledge of more than the contents of a dictionary is needed to complete it. The solver must have lived.

These limitations should be considered defects only if we expect AI to do more than it is capable of doing. I do not wish my remarks to be perceived as denigrating the work of software developers who have produced these products. What they have accomplished is remarkable. We need only be aware that AI tools are assistants and agents. They do not know what we know and cannot do all we can do.

One of the things they cannot do, because they have not been socialized as humans have, is to act in ways that reflect human morality. Next week, I will explore this limitation further by considering the ethical issues associated with the use of artificial intelligence.

Illustrations produced using Google Gemini.